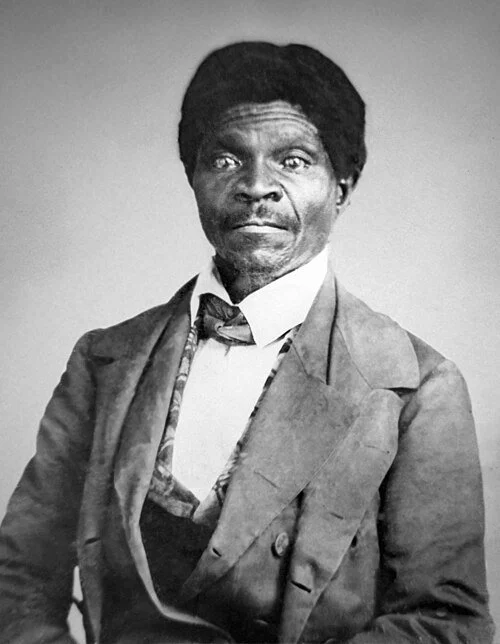

Who Was Dred Scott?

Dred Scott was an enslaved man who sued for his freedom and that of his wife, Harriet, and their two daughters. Scott and his wife claimed their freedom because they had lived in Illinois and the Wisconsin Territory for four years; slavery was illegal in both jurisdictions, and the law there held that slaveholders gave up their rights over enslaved people whom they kept on free soil for an extended period. When his owners brought him back to Missouri, Scott sued for his freedom: first in Missouri state court, which ruled that he was still a slave under its law, and then in U.S. federal court, which ruled against him, deciding that it must apply Missouri law. He ultimately appealed these rulings to the U.S. Supreme Court. The legal case that forms the basis of Dred Scott v. Sandford (1857) is, in fact, a collection of lawsuits initiated in Missouri courts that ultimately reached the U.S. Supreme Court.

The main plaintiff was Dred Scott, a previously enslaved individual who had been living as a freed man. The defendants were first Irene Emerson, the widow of Scott’s owner, and subsequently her brother, John F. A. Sandford. Scott filed a lawsuit for his freedom in the Missouri state court, asserting that he had been taken by his owner into free jurisdictions where slavery was prohibited. Scott had lived for extended periods in areas where slavery was prohibited by law: Illinois (1833–1836), a free state under Illinois law, and the Wisconsin Territory (1836–1838), which was governed by the Northwest Ordinance and later the Missouri Compromise, both of which prohibited slavery. Scott contended that his residence in these free regions rendered him legally free, as Missouri had previously acknowledged the principle that residing in a free jurisdiction emancipated an enslaved person.

His full background was crucial to the case. He was born a slave around 1799. In 1818, Scott was taken by Peter Blow and his family to Alabama. In 1830, the family abandoned farming and relocated to St. Louis. Scott was sold to Dr. John Emerson, a surgeon in the U.S. Army. One unresolved controversy is whether Blow passed away before or after the sale was completed. Scott was dissatisfied with Dr. Emerson and attempted to escape, but was eventually recaptured. In 1833, Emerson moved to Illinois, a free state, and in 1836 to the Wisconsin Territory, which was also free, taking Scott with him. There, Scott met his wife, Harriet, who was also enslaved, and they married, although marriages between slaves were not legally recognized; this fact became another point of contention. Harriet was subsequently sold to Emerson. They had two daughters. In 1840, Emerson returned to Missouri, a slave state, and he died in 1842, with the Scotts being inherited by Emerson’s wife, Irene. In 1846, Scott offered Irene $300 to buy their freedom, but she declined; the offer is noteworthy because it showed that he had funds of his own earned from his labor. That same year, Scott sued for his freedom, arguing that living in free states and territories for prolonged periods of time had made him and his wife free.

Scott first sued for freedom in St. Louis Circuit Court. Initially, Scott won his freedom at trial in 1847. On appeal, the Missouri Supreme Court reversed the decision in 1852, ruling that residing in a free territory did not automatically emancipate him (Scott v. Emerson, 15 Mo. 576). Scott remained enslaved under Missouri law. Meanwhile, Dred, Harriet, and their family lived in Wisconsin. The question was raised whether the cases should be heard there rather than in Missouri, which made it unclear which state, if either, had jurisdiction over the case.

The case then moved to federal court. In 1853, Dred Scott sued John F. A. Sandford, who had inherited him, in federal court in St. Louis. The Blow family supported Scott’s case financially. Scott argued he was a U.S. citizen and thus could invoke diversity jurisdiction under Article III of the Constitution (allowing a citizen of one state to sue a citizen of another state in federal court). In the lower federal courts, he continued to lose but remained living freely in Wisconsin. The federal courts initially split on jurisdiction. Scott appealed to the U.S. Supreme Court in 1856, 10 years after his initial suit. He had been living on his own all of that time.

The Dred Scott Decision

Legal scholars have traditionally considered the Dred Scott decision the worst SCOTUS decision in history. Several reasons exist for its poor standing in antebellum jurisprudence, including the merits of the legal case, its overreaching opinion beyond the case itself (obiter dicta), its inhibition of further legislative compromise on the slavery issue, and its direct influence in precipitating the Civil War. A full comprehension of the legal issues it raised, and of the catastrophe of its dreadful racial pronouncements, is necessary to appreciate its appalling and shocking conclusions. In this regard, it may actually be far worse than the infamous Cornerstone Speech because it supposedly reflected the rule of law.

On March 6, 1857, Chief Justice Roger B. Taney read his opinion, speaking for the majority. The United States Supreme Court decided 7–2 against Scott, finding that neither he nor any other person of African ancestry could claim citizenship in the United States, and therefore Scott could not legally bring suit in federal court. On behalf of the majority, Taney ruled that because Scott was considered the private property of his owners, he fell under the Fifth Amendment’s prohibition on taking property from its owner “without due process.” Hence, Scott’s “temporary residence” outside Missouri did not bring about his emancipation, as it would “improperly deprive Scott’s owner of his legal property.”

This ruling appeared to conflict with the Missouri Compromise of 1820, which admitted Missouri as a slave state in exchange for legislation prohibiting slavery north of the 36°30′ parallel, except in Missouri. Taney noted that the Compromise’s provisions were intended to free slaves who were living north of this line in the western territories. Taney decided that this requirement amounted to the government depriving slaveowners of their property. Therefore, the Missouri Compromise, which had kept the peace for 37 years, was determined to be unconstitutional, even though this finding was unnecessary to the decision in the case. The question was also moot, because the provisions of the Missouri Compromise forbidding slavery in the former Louisiana Territory north of 36°30′ had effectively been repealed by the Kansas–Nebraska Act of 1854.

The decisive elements of the verdict were the determinations that slaves were legally designated as property and that Black people could never be U.S. citizens, even if freed as recognized by state laws. Taney’s ruling meant that a man could be recognized as a citizen of a state but not a citizen of the country. But Taney continued: he ruled that slavery could not legally be excluded from U.S. territories or states because it is recognized in the Constitution.

Minority Opinions

Justice McLean argued that by ruling that Scott was not a citizen, the Court had also ruled that it did not have jurisdiction to hear his case. Consequently, McLean contended that the Court should have simply dismissed the case without further judgment. He attacked much of the Court's decision as obiter dicta (literally: by the way) and thus an opinion that is not legally binding.

Justice Curtis objected to the accuracy of the majority’s historical data, noting that Black men were allowed to vote in five of the thirteen states of the Union at the time of the ratification of the Constitution. He viewed the right to vote as evidence that Black men were already citizens of both their states and of the United States. Justice McLean, in his own dissent, dismissed the objection that a Black citizen would not be an agreeable member of society as “more a matter of taste than of law.”

Taney directly ruled that state citizenship did not accord African-Americans the rights of national citizenship. This seemed to directly contradict Article IV, section 2: "The citizens of each State shall be entitled to all privileges and immunities of citizens in the several States."

Free Blacks were given full citizenship and voted in some states, although they were not allowed on juries. There was no precedent for being a citizen of a state but not of the United States. Such a conclusion leads to highly questionable justice. A Black person could, in theory, sue in their own state (for example, Pennsylvania) if an illegal seizure occurred within that state’s borders. Once the person was taken across state lines, state courts lost jurisdiction, and it became a federal case. But that raised a problem if the plaintiff was a Black person who was not a citizen of the country. No one knew what to make of this, but it appeared that a free Black person had no recourse, and hence that there was no remedy for kidnapping across state lines.

Dred Scott and his family lost the case but remained free. The Scotts were purchased by the owner’s sons, who sold them to Taylor Blow, who manumitted them in May 1857. A year later, Scott died of tuberculosis. Harriet lived for another 19 years as a free woman in St. Louis, dying in 1876. Many people, both then and now, believe that both Scott, who could have just escaped, and the owner, Sandford, saw this as a test case and that there was never really any plan to re-enslave them.

Binding Rulings (Ratio Decidendi) versus Obiter Dicta (Not Necessary to the Decision)

The only binding legal ruling in Dred Scott was that Black people are not citizens of the United States, which meant that Blacks, whether free or enslaved, had no standing to sue in federal court. This conclusion was necessary to decide the case — the Supreme Court dismissed Scott’s federal lawsuit on this basis. Dred Scott had no right to sue in federal court because he was not a citizen. Scott could not invoke diversity jurisdiction under Article III of the Constitution.

A central legal principle is that a ruling should be based on legal principles and not give opinions about extraneous questions. Taney went out of his way to give opinions that had nothing to do with Mr. Scott’s suit. These statements were not required to resolve Scott’s claim but became infamous for their political and legal implications:

1. Congress cannot prohibit slavery in federal territories.

2. The Missouri Compromise of 1820 was unconstitutional, because Congress had no authority to ban slavery in the territories. Reason: Slaveholders’ property rights are protected by the Fifth Amendment.

3. Slaves are property under the Constitution.

4. The Constitution protects slave owners’ property rights across state lines.

5. Assertions that Blacks were “beings of an inferior order… so far inferior that they had no rights which the white man was bound to respect.”

Despite its immense controversy and broad rejection throughout the North, the Dred Scott decision stood as the prevailing law of the land. Those portions of the decision that were central to the decision, like any SCOTUS ruling, are binding law. The question raised was, were the obiter dicta opinions enforceable? Several states passed laws contradicting the ruling, a form of nullification. Central to the ruling was the Court’s declaration that Congress lacked the power to ban slavery in federal territories. This point, which was not strictly necessary to resolve Dred Scott’s specific case (and thus, technically, "obiter dictum"), had immense political consequences. Although such commentary was not legally binding, Taney and his allies intended it as an authoritative reading of the Constitution. Southern leaders and Democrats quickly treated this territorial claim as settled constitutional doctrine, insisting that slavery was lawful in all federal territories.

Taney’s Flawed Legal Reasoning

The Constitution does not explicitly exclude free Black individuals from citizenship. In fact, at the time of its drafting, several states recognized free Blacks as citizens, granting them rights such as voting and property ownership. Moreover, Black individuals contributed to the nation’s founding; for instance, Crispus Attucks, a Black man, was a casualty of the Boston Massacre, and many others served in the Continental Army. These historical realities directly undermine Chief Justice Taney’s claim that the framers unanimously viewed Black people as outside the political community.

Likewise, Taney’s assertion that Congress lacked authority to restrict slavery in federal territories disregarded both historical precedent and established law. The Northwest Ordinance of 1787—subsequently reaffirmed by the First Congress—prohibited slavery in the Northwest Territory. The Missouri Compromise of 1820 similarly regulated the expansion of slavery and had long been accepted as a valid exercise of congressional power.

Taney’s opinion, therefore, was not compelled by the Constitution—it was a partisan, pro-slavery interpretation that projected into the document values which were never explicitly present. The dissenting justices underscored this point, arguing that both citizenship and the regulation of territories were matters traditionally left to congressional and state authority. Many scholars consequently regard Dred Scott as a paradigmatic instance of judicial activism—a case in which the Court inserted itself into a contentious political debate, expanding the reach of slavery instead of addressing only the case at hand. Thus, while Taney’s decision may have reflected the prevailing political influence of slaveholders, it was neither required by law nor morally defensible, even within the context of the antebellum Constitution.

Legal Consequence for Black Americans

The excerpts presented in table 2 demonstrate the harsh language employed by Taney and the majority. Read closely, these statements show how appalling the ramifications of Taney’s decision were. The ruling meant that Black individuals were denied recognition as citizens of the United States and would remain in that condition indefinitely. Dred Scott, along with all subsequent Black plaintiffs, was barred from filing lawsuits in federal court and could not pursue legal action if the issue crossed state lines, leaving them powerless because of their race and status as former slaves. Additionally, slaves were categorized as property protected under the Fifth Amendment, meaning they could not be taken from their owners without due process of law. The Constitution was interpreted as institutionalizing the denial of rights based on skin color, a circumstance that could not be undone. The ruling held that the natural or inherent rights of individuals to life, liberty, and the pursuit of happiness were never intended to apply to Black individuals. As a result, Black people were not considered fully human and lacked legal equality with white individuals. Simply living in free territory or being independent for a prolonged period would not provide sufficient grounds for their emancipation.

The legal consequences were disastrous. Blacks would have no rights that whites were required to honor. The ruling denied Blacks, whether free or enslaved, standing in federal court. If no Black individual could ever file a federal lawsuit, they were left utterly powerless. Black individuals would be vulnerable to federal crimes committed against them, as they could not seek justice in a federal court.

The Fifth Amendment

Taney’s majority opinion emphasized that enslaved individuals were regarded as property, which meant that the federal government could not interfere with a slave owner’s right to transport that property into any territory. The Fifth Amendment states that no individual can be deprived of life, liberty, or property without due process of law, nor can private property be taken for public use without just compensation. Taney’s opinion was based on the Fifth Amendment’s protection of property, explicitly referencing the Due Process Clause. This legal reasoning required two premises: 1) that slaves possessed none of the natural rights of men, and 2) that the U.S. Constitution legally classified them as property.

Taney contended that enslaved individuals were legally recognized as property under both state and federal law (as safeguarded by the Constitution’s acknowledgment of slavery in provisions such as the Fugitive Slave Clause and the Three-Fifths Clause). Consequently, if Congress enacted a law—such as the Missouri Compromise—that prohibited slavery in federal territories, it would be unconstitutional as it deprived slaveholders of their property (enslaved individuals) without due process of law. He stated: “An act of Congress which deprives a citizen of his property merely because he brought his property into a particular Territory of the United States … could hardly be dignified with the name of due process of law.”

Taney reasoned that enslaved individuals were a type of property recognized by law. Slaveholders were “citizens” entitled to protections under the Fifth Amendment. Thus, any federal law prohibiting slavery in a territory infringed upon property rights without due process. If slaves were considered persons, they could not be deprived of their liberty without due process of law. However, if slaves were deemed “property,” then masters could not be deprived of their property without due process. From the viewpoint of 1789, the only reasonable interpretation of the Fifth Amendment was to conclude that slaves were legally not persons.

There was legal precedent for this interpretation. Justice Story wrote, in the majority opinion of Prigg v. Pennsylvania (1842): “We have said that the clause [Article IV, Section 2] contains a positive and unqualified recognition of the right of the owner in the slave, unaffected by any state law or legislation whatsoever, because there is no qualification or restriction of it to be found therein, and we have no right to insert any which is not expressed and cannot be fairly implied … The owner must, therefore, have the right to seize and repossess the slave, which the local laws of his own state confer upon him as property ….”

The Tenth Amendment

The Tenth Amendment to the United States Constitution had implications for slavery that were profound but are often misunderstood. It provides that powers not delegated to the United States by the Constitution, nor prohibited to the states, are reserved to the states respectively, or to the people. Before 1857, this language reinforced a widely accepted constitutional arrangement: slavery was regulated by state law. Because the Constitution granted Congress no general authority over slavery within existing states, regulation of the institution inside state borders was understood to belong to each state. Massachusetts could abolish slavery; South Carolina could entrench and expand it. Congress, as a rule, did not interfere with slavery where it already existed under state authority.

But the Tenth Amendment did not protect slavery everywhere. It reserved undelegated powers to the states; it did not retract powers that were expressly delegated to Congress. Among those enumerated powers was authority over federal territories under Article IV, Section 3—the Territorial Clause. Under that authority Congress had governed slavery’s status outside the states, most notably through the Northwest Ordinance, which prohibited slavery in the Northwest Territory, and later through the Missouri Compromise. Congress also exercised its commerce power to end the international slave trade in 1808. None of these measures were thought, prior to 1857, to violate the Tenth Amendment, because they operated in areas where federal authority was textually grounded.

Pro-slavery constitutional theorists frequently blurred this distinction. They argued that because slavery was a “state right,” it carried a kind of constitutional immunity wherever Americans went. That reading converted a rule about reserved powers into a claim of national protection for slavery, something the Constitution nowhere explicitly declared. Properly understood, the Tenth Amendment meant that slavery inside a state depended on that state’s law. It did not mean that Congress was powerless in the territories, nor that slavery enjoyed automatic constitutional shelter beyond state borders. The constitutional struggle before the Civil War centered precisely on where state authority ended and federal authority began. Slavery stood at that fault line.

The Supreme Court’s decision in Dred Scott transformed that equilibrium. Taney held that enslaved persons were property protected by the Fifth Amendment’s Due Process Clause and that Congress therefore lacked authority to prohibit slavery in the territories. In practical effect, this reasoning nationalized slavery. What had previously been treated as a matter of state law within states and federal discretion in territories became, under Taney’s logic, a constitutionally protected property right that Congress could not exclude from federal soil. The earlier balance (state control locally, congressional control territorially) was overturned. The Tenth Amendment’s reservation of powers to the states remained textually intact, but its practical significance for containing slavery was gutted.

The Political Rise of Abraham Lincoln

It was at this moment that Abraham Lincoln made his decisive political move. Before Dred Scott, most Americans accepted that states controlled slavery within their borders while Congress governed it in the territories. The Court’s decision shattered that arrangement by declaring that neither Congress nor territorial voters could bar slavery’s expansion. Lincoln grasped immediately that this logic placed slavery on a trajectory toward nationalization. In his “House Divided” speech, he warned that the country could not endure permanently half slave and half free because the constitutional barriers to slavery’s spread were being dismantled.

Lincoln’s brilliance lay not merely in opposing slavery but in explaining how the Court’s reasoning reordered the Constitution itself. By locating slavery’s protection in the Fifth Amendment and denying Congress authority in the territories, the Court rendered the earlier federal-state balance effectively meaningless. Lincoln translated that constitutional disruption into a moral and democratic argument ordinary voters could understand: self-government was being made powerless. If majorities in the territories could not decide the status of slavery, then “popular sovereignty” was a façade. By exposing that contradiction, Lincoln built a coalition that understood the crisis not simply as a sectional dispute but as a threat to constitutional democracy. His capacity to connect technical constitutional reasoning with a compelling public narrative is central to his enduring stature in American political history.

Conclusion

The consequences of Dred Scott were devastating. By denying Black Americans citizenship, the Court stripped them of access to federal courts, leaving free Blacks defenseless against kidnapping across state lines. By constitutionalizing slave property, it placed slavery beyond democratic control. If Congress could not regulate slavery in the territories, then neither could settlers. The institution was no longer local—it was being made national.

In trying to settle the slavery question, the Court destroyed the constitutional middle ground. The nation was now forced to choose: slavery would either expand everywhere or be stopped by political resistance. Abraham Lincoln saw the crisis, articulated its impact before anyone else, and seized the political opening it created.
